Sustainable IT, one Bit at a time.

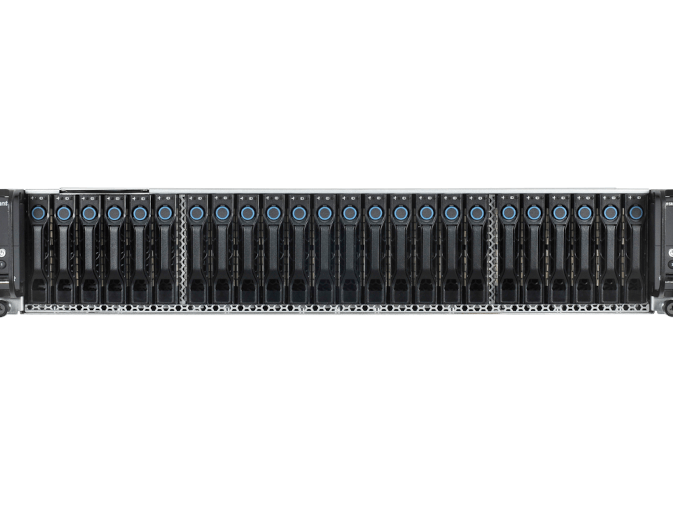

Novarion is a server manufacturer that takes sustainability seriously. Our efforts range from energy-efficient power supply units and high-quality components to avoiding environmentally harmful substances and helping data centers reuse their waste heat. By choosing Novarion as your server manufacturer, you automatically reduce your own environmental impact and improve your operational and cost efficiency.

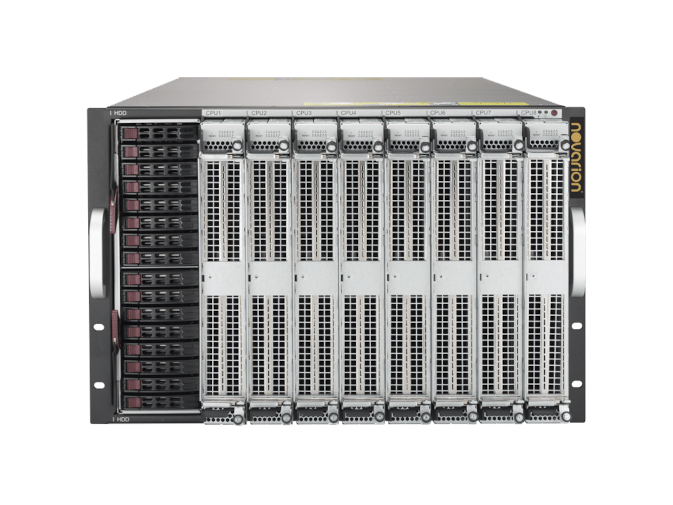

Experience the future of computing with Quanton® HQC products

Computational complexity requires highly connected hardware systems. Novarion's unique heterogeneous-processing and memory-centric computing systems integrate the latest chip technologies into a single supercomputer capable of turning an entire data center into an artificial brain. Equipped with generative AI, these novel data centers are poised to change the world.

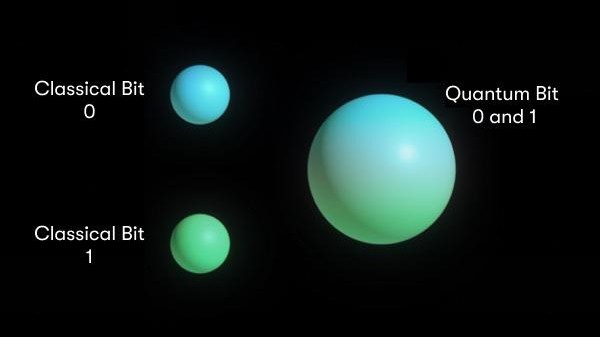

Quantum Computing

As part of one of the most advanced quantum IT groups, we are excited and proud to develop this technology and to enable our customers to use Hybrid Quantum Computing today to achieve their ambitious goals.

NEWS

NOVARION helps develop and integrate next-generation Quantum Key Distribution Systems

NOVARION helps develop and integrate next-generation Quantum Key Distribution Systems into…

NOVARION launches Virtual Coworker

NOVARION Systems, a technology leader in High-Performance Computing and AI, today…

NOVARION’s Built2Order Systems Program Prepares Enterprises for AI

NOVARION Systems, a technology leader in high-end server and data storage solutions,…

KI Park Tech Talk: Opportunities and risks of quantum technologies

We are pleased to announce that Tech Talk: Opportunities and Risks of Quantum…

Get Quantum Advantage without Quantum Devices? Yes, says Terra Quantum

Is it possible to get quantum advantage without actually using a quantum device? One…